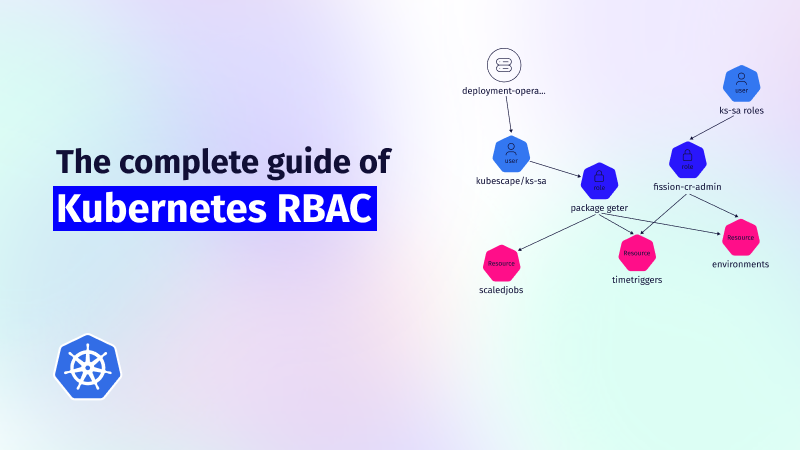

Kubernetes RBAC: Deep Dive into Security and Best Practices

This guide explores the challenges of RBAC implementation, best practices for managing RBAC in Kubernetes,...

Nov 29, 2023

Kubernetes is a container orchestration tool that helps with the deployment and management of containers.

Within Kubernetes, a container runs logically inside a pod, which you can think of as one instance of a running service. Pods are ephemeral and don’t heal themselves, which makes them fragile. They can go down when the server is interrupted, during a brief network problem, or because of a minor memory issue, and when they do, they can take your entire application down with them. Kubernetes deployments help prevent this downtime.

In a deployment, you describe the desired state of your application, and Kubernetes continuously checks whether the actual state matches it. A deployment creates a ReplicaSet, which ensures that the desired number of pods is running. If a pod goes down due to an interruption, the ReplicaSet controller notices that the actual state no longer matches the desired state and creates a new pod.

Deployments offer:

There are two ways to create a Kubernetes deployment: imperatively, by running kubectl commands, or declaratively, by applying YAML manifests.

While the imperative method can be quicker initially, it definitely has some drawbacks. It’s hard to see what’s changed when you’re managing deployments using imperative commands.

The declarative method, on the other hand, is self-documenting. Every configuration is in a file and this file can be managed in Git. Many developers can work on the same deployments and have a clear history of who changed what. Following the declarative method will make it possible to use GitOps principles, where all configurations in Git are used as the only source of truth.

As an added benefit, a later transition to popular tools like Helm and Argo CD will go more smoothly.

Now, let’s create a basic K8s deployment showing both methods:

Imperative:

$ kubectl create deployment nginx-deployment --image nginx --port=80

Declarative (deployment.yaml):

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  selector:
    matchLabels:
      app: nginx-deployment
  replicas: 1
  template:
    metadata:
      labels:
        app: nginx-deployment
    spec:
      containers:
        - name: nginx
          image: nginx:latest
          ports:
            - containerPort: 80
```

Apply the manifest:

$ kubectl apply -f deployment.yaml

Verify the deployment:

```
$ kubectl get deployment nginx-deployment
NAME               READY   UP-TO-DATE   AVAILABLE   AGE
nginx-deployment   1/1     1            1           99s
```

Describe your deployment:

$ kubectl describe deployment nginx-deployment

Let’s check the ReplicaSet created by the deployment:

```
$ kubectl get rs
NAME                          DESIRED   CURRENT   READY   AGE
nginx-deployment-7c9cfd4dc7   1         1         1       2m5s
```

Now we’ll scale the deployment to two pods. To follow the declarative method, just update the YAML file and re-apply. The imperative method requires the following command:

$ kubectl scale deployment nginx-deployment --replicas=2

We can do some basic testing by deleting a pod.

```
$ kubectl get pods -l app=nginx-deployment
NAME                                READY   STATUS    RESTARTS   AGE
nginx-deployment-7c9cfd4dc7-c64nm   1/1     Running   0          3m45s
nginx-deployment-7c9cfd4dc7-h529d   1/1     Running   0          21s
```

Delete a pod:

```
$ kubectl delete pod nginx-deployment-7c9cfd4dc7-c64nm
pod "nginx-deployment-7c9cfd4dc7-c64nm" deleted
```

Check your pods again. You’ll see that a new pod was created to bring the actual state back in line with the desired state.

```
$ kubectl get pods -l app=nginx-deployment
NAME                                READY   STATUS    RESTARTS   AGE
nginx-deployment-7c9cfd4dc7-h529d   1/1     Running   0          58s
nginx-deployment-7c9cfd4dc7-t78hk   1/1     Running   0          3s
```

You can make your deployment work in multiple ways:

When you describe your deployment, you can see the default update type:

```
$ kubectl describe deployment nginx-deployment
…
StrategyType:           RollingUpdate
…
```
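In the manifest, the update strategy lives under spec.strategy. The sketch below is the earlier deployment.yaml with that block spelled out explicitly. The values shown are the apps/v1 defaults, so applying it should not change how the deployment behaves; Recreate is the only other strategy type, and it stops all old pods before starting new ones.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-deployment
  strategy:
    type: RollingUpdate        # the default; the alternative is Recreate
    rollingUpdate:
      maxSurge: 25%            # default: extra pods allowed above "replicas" during an update
      maxUnavailable: 25%      # default: pods that may be unavailable during an update
  template:
    metadata:
      labels:
        app: nginx-deployment
    spec:
      containers:
        - name: nginx
          image: nginx:latest
          ports:
            - containerPort: 80
```

Lowering maxUnavailable to 0 makes the rollout more conservative, because Kubernetes then only removes an old pod after a new one is available.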

Test the RollingUpdate strategy by scaling the deployment to 10 replicas and then deploying another version:

$ kubectl scale deployment nginx-deployment --replicas=10

Update the image of your deployment:

$ kubectl set image deployment.v1.apps/nginx-deployment nginx=nginx:1.14

Check the status of your deployment:

```
$ kubectl rollout status deployment/nginx-deployment
Waiting for deployment "nginx-deployment" rollout to finish: 5 out of 10 new replicas have been updated…
Waiting for deployment "nginx-deployment" rollout to finish: 6 out of 10 new replicas have been updated…
Waiting for deployment "nginx-deployment" rollout to finish: 7 out of 10 new replicas have been updated…
```

As you can see, the deployment is working as a rolling deployment by default.

If a bug is introduced in the latest version of your application, you’ll want to roll back as quickly as possible.

Check the revisions of your deployment:

```
$ kubectl rollout history deployment/nginx-deployment
deployment.apps/nginx-deployment
REVISION  CHANGE-CAUSE
1         <none>
2         <none>
```
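The CHANGE-CAUSE column is empty because nothing recorded why each revision was made. With the declarative method, one option is to set the kubernetes.io/change-cause annotation in the manifest alongside the change itself. The sketch below is a declarative version of the scale and set image steps above; the annotation text is only an example.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  annotations:
    # Example text; displayed in the CHANGE-CAUSE column of kubectl rollout history
    kubernetes.io/change-cause: "scale to 10 replicas, update image to nginx:1.14"
spec:
  replicas: 10                 # declarative version of kubectl scale --replicas=10
  revisionHistoryLimit: 10     # default number of old ReplicaSets kept for rollbacks
  selector:
    matchLabels:
      app: nginx-deployment
  template:
    metadata:
      labels:
        app: nginx-deployment
    spec:
      containers:
        - name: nginx
          image: nginx:1.14    # declarative version of kubectl set image
          ports:
            - containerPort: 80
```

After kubectl apply -f deployment.yaml, the new revision should list the annotation text instead of an empty change cause. Because revisionHistoryLimit caps how many old ReplicaSets are kept, it also caps how far back you can roll.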

The following command will roll back to the previous version:

$ kubectl rollout undo deployment/nginx-deployment

Or roll back to a specific revision:

$ kubectl rollout undo deployment/nginx-deployment --to-revision=1

We rolled back our deployment, so our image is nginx:latest again.

Deployments ensure your applications remain available by keeping the desired number of pods running and replacing unhealthy pods with new ones.

Because a pod gets a new IP address whenever it’s replaced by a new pod, you can expect your application’s IP to change frequently. If multiple pods run your application, you’ll have multiple frequently changing IPs. This makes it hard to establish stable communication between the outside world and your application, and between applications inside your cluster.

A Kubernetes service is the solution to this problem. A service is a virtual component rather than a running instance: kube-proxy implements it as a set of networking rules (iptables rules in its default mode) on every node. Because no process has to stay alive for the service to work, it can’t go down the way a pod can. A service exposes a Kubernetes deployment behind a stable virtual IP: instead of talking to the pods, you talk to the service, which routes the traffic to the pods. The cluster’s internal DNS even makes it possible to reach a service by its FQDN instead of its IP.

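Finally, it doesn’t matter which node a pod is deployed on: the service distributes traffic across all matching pods. In kube-proxy’s default iptables mode, the backend pod is chosen at random; in IPVS mode, the default is round-robin.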

Kubernetes offers several types of services; this guide covers the three most commonly used: ClusterIP, NodePort, and LoadBalancer.

ClusterIP is the default service type and creates an internal service in front of your deployment. That’s it. The service isn’t accessible from outside the cluster. This type of service is recommended for establishing intra-cluster communication between applications.

For example, when a frontend service needs to talk to a backend service, the backend should not be accessible from outside the cluster. In the frontend, you can configure the FQDN and port of the backend service to reach it over the cluster network without exposing it publicly.
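A minimal sketch of this pattern follows. All names are hypothetical (backend-service, frontend, the BACKEND_URL variable, and the example/frontend:1.0 image), and it assumes the default namespace and the default cluster.local domain. The backend gets a ClusterIP service, and the frontend receives the backend’s FQDN as an environment variable.

```yaml
# Hypothetical backend service; ClusterIP keeps it reachable only inside the cluster
apiVersion: v1
kind: Service
metadata:
  name: backend-service
spec:
  type: ClusterIP              # the default, written out for clarity
  selector:
    app: backend               # assumes the backend pods carry this label
  ports:
    - protocol: TCP
      port: 8080
      targetPort: 8080
---
# Hypothetical frontend that finds the backend through cluster DNS
apiVersion: apps/v1
kind: Deployment
metadata:
  name: frontend
spec:
  replicas: 2
  selector:
    matchLabels:
      app: frontend
  template:
    metadata:
      labels:
        app: frontend
    spec:
      containers:
        - name: frontend
          image: example/frontend:1.0     # placeholder image
          env:
            - name: BACKEND_URL           # hypothetical variable read by the frontend
              # <service>.<namespace>.svc.cluster.local, assuming namespace "default"
              value: "http://backend-service.default.svc.cluster.local:8080"
```

Within the same namespace, the short name backend-service also resolves; the FQDN simply avoids any ambiguity.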

The NodePort service type can be used to expose a service to the public without a load balancer. This is often used during testing, or with a local cluster like minikube. The service exposes the deployment by opening the same port on every node in the Kubernetes cluster, automatically picking a port in the 30000-32767 range by default.

Now people outside the cluster can reach the application at node1-ip:port, node2-ip:port, and so on. This is not the best way to expose a deployment to the world, because exposing multiple applications means opening a lot of ports on your nodes. Worse, you lose the advantage of high availability.

When an application outside your cluster needs to talk to an application running in your cluster, you have to point it at one specific node (remember: node1-ip:port). If node1 goes down, the application is no longer reachable, even though the deployment recreates the desired number of pods on other nodes. Those pods are still reachable through the service, but the single node you connect to remains a single point of failure.

Creating a load balancer in front of your nodes solves the problems noted above, and it brings back the benefit of having a highly available setup. Furthermore, only the load balancer would need to connect to your nodes, eliminating the need to open ports on your node to the public.

The LoadBalancer service type is the recommended way to expose a Kubernetes deployment: it creates a load balancer in front of your nodes and routes the traffic to them. If a node goes down, the load balancer routes the traffic to a different node. From there, the service routes it to a pod. Note that this pod does not have to run on the node that received the traffic from the load balancer.

Ingress will allow you to reuse the same load balancer for multiple services.
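As a sketch, the Ingress below sends traffic for two paths to two different services. It assumes an NGINX ingress controller is installed (the controller itself is typically exposed through one LoadBalancer service) and that a second, hypothetical backend-service exists. The services behind the Ingress can stay of type ClusterIP.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: shared-ingress                 # hypothetical name
spec:
  ingressClassName: nginx              # assumes an NGINX ingress controller is installed
  rules:
    - http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: my-nginx-service # the service from this guide
                port:
                  number: 8080
          - path: /api
            pathType: Prefix           # the longer matching prefix wins, so /api goes here
            backend:
              service:
                name: backend-service  # hypothetical second service
                port:
                  number: 8080
```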

Now we’ll create a Kubernetes service using our deployment from the previous section.

To create a ClusterIP service (default), use the following command:

$ kubectl expose deployment nginx-deployment --name my-nginx-service --port 8080 --target-port=80

Or by using YAML:

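```yaml
apiVersion: v1
kind: Service
metadata:
  name: my-nginx-service
  labels:
    app: nginx-deployment   # lets kubectl get svc -l app=nginx-deployment find this service
spec:
  selector:
    app: nginx-deployment   # route traffic to the deployment's pods
  ports:
    - protocol: TCP
      port: 8080            # port exposed by the service
      targetPort: 80        # containerPort of the nginx pods
```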

This service is not accessible from the outside. The service maps the service IP 10.96.86.203:8080 to our pods on port 80.

```
$ kubectl get svc -l app=nginx-deployment
NAME               TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)    AGE
my-nginx-service   ClusterIP   10.96.86.203   <none>        8080/TCP   87s
```
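To check the ClusterIP service from inside the cluster, one option is a throwaway pod that calls the service by its DNS name. This sketch assumes the default namespace and cluster domain; curl-test is a hypothetical name, and curlimages/curl is a public image that ships the curl binary.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: curl-test                # hypothetical throwaway pod
spec:
  restartPolicy: Never           # run the request once, then stop
  containers:
    - name: curl
      image: curlimages/curl
      # Call the service by its FQDN instead of its ClusterIP;
      # assumes the service lives in the "default" namespace
      command: ["curl", "-s", "http://my-nginx-service.default.svc.cluster.local:8080"]
```

After applying it, kubectl logs curl-test should show the nginx welcome page if the service is working. Remove the pod afterwards with kubectl delete pod curl-test.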

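Now we’ll create a NodePort service, which automatically chooses a port in the 30000-32767 range. Because it reuses the name my-nginx-service, delete the ClusterIP service first with kubectl delete svc my-nginx-service. The --target-port is the port your container exposes; the --port is the port you want the service itself to expose.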

$ kubectl expose deployment nginx-deployment --name my-nginx-service --port 8080 --target-port=80 --type NodePort
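A declarative equivalent might look like the following sketch, using the same name, labels, and ports as the ClusterIP manifest. The nodePort field is left commented out: when it’s omitted, Kubernetes assigns a free port from the node-port range, which is what happens in the output that follows.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: my-nginx-service
  labels:
    app: nginx-deployment      # lets kubectl get svc -l app=nginx-deployment find it
spec:
  type: NodePort
  selector:
    app: nginx-deployment      # route traffic to the deployment's pods
  ports:
    - protocol: TCP
      port: 8080               # the service's port inside the cluster
      targetPort: 80           # the nginx containerPort
      # nodePort: 30080        # optional; must fall in the node-port range (30000-32767 by default)
```

Pinning nodePort gives you a predictable port to open in your firewall, at the cost of having to avoid collisions with other services yourself.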

Check our service of type NodePort:

```
$ kubectl get svc -l app=nginx-deployment
NAME               TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)          AGE
my-nginx-service   NodePort   10.105.154.85   <none>        8080:30522/TCP   4s
```

Check the IP of one of your nodes in the cluster:

```
$ kubectl get nodes -o wide
..   VERSION   INTERNAL-IP    ..
..   v1.22.3   192.168.49.2   ..
```

Now access the service through your node’s IP and the assigned port. If a firewall protects your node, don’t forget to open that port first:

```
$ curl 192.168.49.2:30522
<!DOCTYPE html>
…
<body>
<h1>Welcome to nginx!</h1>
<p>If you see this page, the nginx web server is successfully …
</body>
</html>
```

The traffic will be routed from our node on port 30522 to the internal service (10.105.154.85:8080), and then from the service to our container (port 80).

Finally, we’ll create the LoadBalancer service. The easiest way to use this service type is to host your Kubernetes cluster in the cloud; the most popular managed options are EKS (AWS), AKS (Azure), and GKE (GCP). Creating a LoadBalancer service provisions a load balancer managed by your cloud provider. Because the service definition itself is the same on every provider, your Kubernetes configuration stays largely cloud independent, and migrating your cluster between cloud providers shouldn’t be too hard.

Now we’ll create the LoadBalancer service:
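Imperatively, this is the same kubectl expose command as before with --type LoadBalancer. A declarative sketch follows, assuming the same name and ports as the previous examples; delete the NodePort service first, because the name is reused.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: my-nginx-service
  labels:
    app: nginx-deployment
spec:
  type: LoadBalancer           # asks the cloud provider to provision a load balancer
  selector:
    app: nginx-deployment
  ports:
    - protocol: TCP
      port: 8080               # port the cloud load balancer listens on
      targetPort: 80           # containerPort of the nginx pods
  # Cluster (the default): a node may forward traffic to a pod running on another node
  externalTrafficPolicy: Cluster
```

By default, Kubernetes also allocates a NodePort for a LoadBalancer service, which is why the PORT(S) column pairs 8080 with a port from the node-port range.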

Now that a LoadBalancer service has been created, your cloud provider (AWS in this case) will create a load balancer for you.

```
NAME               TYPE           CLUSTER-IP     EXTERNAL-IP                           PORT(S)          AGE
my-nginx-service   LoadBalancer   10.100.17.58   xxx-yyy.eu-west-1.elb.amazonaws.com   8080:30985/TCP   21s
```

Each load balancer gets its own DNS name and listens on the service port (8080 here); combine the two to reach it with curl. The load balancer forwards the request to the service’s NodePort on one of your nodes, and from there it is routed to a pod.

```
$ curl http://xxx-yyy.eu-west-1.elb.amazonaws.com:8080
<!DOCTYPE html>
…
<body>
<h1>Welcome to nginx!</h1>
<p>If you see this page, the nginx web server is successfully …
</body>
</html>
```

The load balancer is also visible in your cloud environment:

$ aws elb describe-load-balancers

By using a load balancer, we’ve created two layers of high availability. The load balancer will route to healthy nodes, and, thanks to the ReplicaSet controller, our Kubernetes deployments will make sure a pod is running on a healthy node. An unhealthy node or an unhealthy pod won’t impact your application’s availability if the deployment and service are set up correctly.

Security is a paramount concern in Kubernetes cluster management. As organizations increasingly rely on Kubernetes to deploy and manage their applications, ensuring the security of containerized workloads becomes a critical aspect of this technology’s adoption. Kubernetes offers several built-in security features, such as Role-Based Access Control (RBAC), network policies, and secure container runtimes. It is essential to configure these security features to protect the cluster from potential threats and unauthorized access. Additionally, third-party security tools and best practices play a vital role in enhancing the overall security posture of a Kubernetes cluster. ARMO Platform, for instance, is one such tool that not only provides comprehensive security assessments but also streamlines the installation of security measures, ensuring that your Kubernetes cluster remains resilient against evolving cybersecurity challenges.

As we’ve shown, Kubernetes deployments keep the desired number of pods running, which makes your pods highly available. Combined with Kubernetes services, and in particular a LoadBalancer service that routes around failed nodes, this is a resilient way to make applications hosted in Kubernetes more robust.
